Tell us your deployment. We'll tell you what hardware to buy.

Describe your cameras, model, power, and environment. Get a recommended platform, sized infrastructure, bottleneck analysis, and a BOM your procurement team can use. Jetson, Coral, Hailo, RK3588 — vendor-neutral.

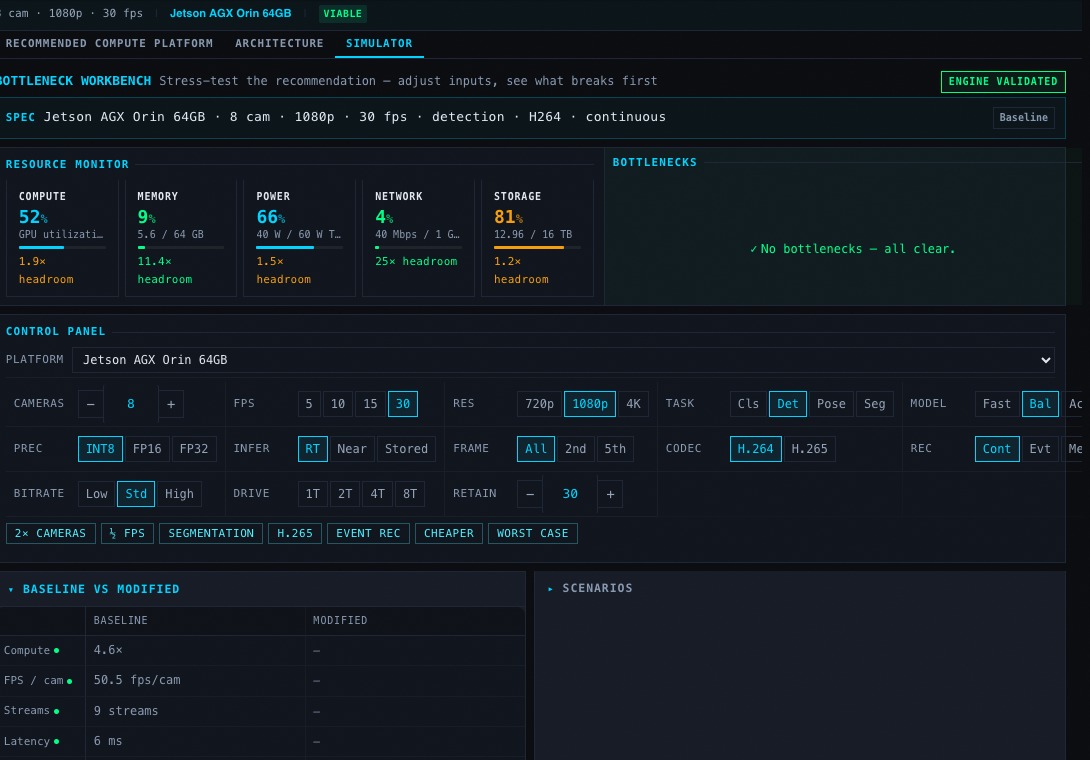

What breaks first when you double the cameras?

After every recommendation, push the limits. Double the cameras, switch codecs, or change models, and watch compute, memory, power, network, and storage shift in real time. Find the bottleneck before you buy the hardware.

- Live compute, memory, power, network, and storage gauges

- One-click stress scenarios — 2× cameras, worst case, H.265

- Baseline vs modified comparison

- Engine-validated with confidence scoring
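A baseline-versus-modified comparison of this kind can be sketched in a few lines. This is only an illustration, not EdgeAIStack's engine code: the `Scenario` type, the function names, and above all the per-camera bitrates are assumptions chosen for the example, and real bitrates vary with scene content, frame rate, and encoder settings.

```python
from dataclasses import dataclass, replace

# ASSUMPTION: illustrative per-camera bitrates in Mbps, not measured benchmarks.
ASSUMED_BITRATE_MBPS = {"h264": 4.0, "h265": 2.0}


@dataclass(frozen=True)
class Scenario:
    """Hypothetical container for the inputs a stress scenario changes."""
    camera_count: int
    codec: str
    retention_days: int


def network_mbps(s: Scenario) -> float:
    """Aggregate ingest bandwidth: cameras times assumed per-camera bitrate."""
    return s.camera_count * ASSUMED_BITRATE_MBPS[s.codec]


def storage_tb(s: Scenario) -> float:
    """Recording footprint in decimal terabytes over the retention window."""
    seconds = s.retention_days * 86_400
    megabits = network_mbps(s) * seconds
    return megabits / 8 / 1_000_000  # megabits -> megabytes -> terabytes


def compare(baseline: Scenario, modified: Scenario) -> dict:
    """Return (baseline, modified) pairs for each tracked resource."""
    return {
        "network_mbps": (network_mbps(baseline), network_mbps(modified)),
        "storage_tb": (storage_tb(baseline), storage_tb(modified)),
    }


baseline = Scenario(camera_count=8, codec="h264", retention_days=30)
doubled = replace(baseline, camera_count=16)    # the "2x cameras" scenario
switched = replace(baseline, codec="h265")      # the "H.265" scenario

for name, scenario in [("2x cameras", doubled), ("H.265", switched)]:
    print(name, compare(baseline, scenario))
```

Under these assumed bitrates, doubling the camera count doubles both bandwidth and storage, while switching codec halves them. Compute, memory, and power need their own models and are left out of this sketch.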

10 deterministic engines. 4-tier validation. Zero hallucination.

Every recommendation is computed by specialized sizing engines backed by source-attributed benchmarks and confidence-scored data. No LLM generates the numbers — engines are deterministic functions validated against 137 rules across 1,033 input combinations.

- Hardware Selector

- GPU Sizing & Stream Capacity

- Network Bandwidth

- Storage Endurance

- Power Budget & Module TDP

- Inference & Memory Estimator

- Deployment Cost (BOM)

- L1 — Individual engine unit tests

- L2 — Orchestrated engine validation

- L3 — Cross-engine consistency

- L4 — Architecture validation (AVE)

- 500+ validated benchmarks

- Source-attributed, confidence-scored

- 75 research topics completed

- 10-stage research-to-production pipeline
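The idea of a deterministic engine checked against rules across many input combinations can be illustrated with a small sketch. Everything here is hypothetical: the power formula, the two rules, and the input grid are stand-ins invented for the example and do not represent EdgeAIStack's engines, its 137 rules, or its 1,033 input combinations.

```python
from itertools import product


def power_budget_watts(camera_count, module_tdp_w, poe_w_per_camera):
    """ASSUMED toy engine: a pure function, so equal inputs give equal outputs."""
    return module_tdp_w + camera_count * poe_w_per_camera


def rule_non_negative(inputs, result):
    """A power budget can never be negative."""
    return result >= 0


def rule_monotonic_in_cameras(inputs, result):
    """Adding a camera must never lower the power budget."""
    cameras, tdp, poe = inputs
    return power_budget_watts(cameras + 1, tdp, poe) >= result


RULES = [rule_non_negative, rule_monotonic_in_cameras]


def validate(engine, rules, grid):
    """Run every rule over every input combination and collect failures."""
    failures = []
    for inputs in grid:
        result = engine(*inputs)
        if engine(*inputs) != result:  # repeat the call to check determinism
            failures.append(("nondeterministic", inputs))
        for rule in rules:
            if not rule(inputs, result):
                failures.append((rule.__name__, inputs))
    return failures


# Hypothetical grid: camera counts x module TDP (W) x PoE draw per camera (W).
GRID = list(product([1, 4, 8, 16], [10.0, 25.0, 60.0], [0.0, 7.0, 13.0]))

failures = validate(power_budget_watts, RULES, GRID)
print(f"{len(GRID)} combinations checked, {len(failures)} failures")
```

Because the engine is a pure function, the same grid gives the same verdict on every run, which is what makes rule-based regression checks like this meaningful.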

Any AI assistant can call these engines.

EdgeAIStack exposes all sizing engines as tool calls. MCP server for Claude, Cursor, and Windsurf. OpenAPI spec for GPTs and custom agents. One API call runs 8 engines in parallel and returns a complete deployment specification with confidence scores.

```json
{
  "tool": "design_system",
  "input": {
    "model": "yolov8n",
    "camera_count": 8,
    "resolution": "1080p",
    "retention_days": 30,
    "optimize_for": "balanced"
  }
}
```
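A custom agent might assemble that request along these lines. This is a minimal sketch: the helper name `build_design_system_call` and its validation checks are assumptions made for illustration, and the transport (MCP client or HTTP request) is deliberately omitted because the endpoint is not stated here. Only the field names and sample values are taken from the example request.

```python
import json


def build_design_system_call(model, camera_count, resolution,
                             retention_days, optimize_for="balanced"):
    """Hypothetical helper that builds the design_system tool-call payload."""
    # ASSUMPTION: these sanity checks are illustrative, not documented API rules.
    if not isinstance(camera_count, int) or camera_count < 1:
        raise ValueError("camera_count must be a positive integer")
    if not isinstance(retention_days, int) or retention_days < 0:
        raise ValueError("retention_days must be a non-negative integer")
    return {
        "tool": "design_system",
        "input": {
            "model": model,
            "camera_count": camera_count,
            "resolution": resolution,
            "retention_days": retention_days,
            "optimize_for": optimize_for,
        },
    }


payload = build_design_system_call(
    model="yolov8n",
    camera_count=8,
    resolution="1080p",
    retention_days=30,
)

# Serialize for whichever transport the agent uses.
print(json.dumps(payload, indent=2))
```

Validating inputs before sending keeps obviously malformed requests from reaching the sizing engines, though the service's own validation remains authoritative.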

Still researching?

The engines give you answers. The guides give you context — Jetson power modes, storage endurance, PoE budgeting, thermal constraints, and deployment checklists. Start with the hardware guide or browse all 35+ engineering guides.